The Chaos of Manual Deployments

Six months ago, my team’s deployment process felt like a high-stakes game of Jenga. Every update required a developer to manually build the project, ZIP the files, and pray while uploading them via FTP. It was slow, error-prone, and a soul-crushing waste of engineering time.

We were constantly stuck in ‘hotfix cycles,’ where a single forgotten configuration file was enough to cause a production outage. Automating this wasn’t just a technical upgrade; it was survival. Scalability only starts when you stop touching the production server with your hands.

The Breaking Point: Why Our Deployments Failed

After auditing our failures, I found three culprits common to most growing IT teams. First was ‘environmental drift.’ Production had become a black box. Manual tweaks meant the live environment no longer matched staging, making tests useless.

Next came the ‘artifact mismatch.’ One developer might use .NET SDK 8.0.1 while another used 8.0.4. These tiny version gaps created inconsistent binaries that worked on one machine but crashed in the cloud. Finally, security was a nightmare. Sharing FTP credentials or publish profiles across the team created a massive, unmanaged attack surface. We needed a system that enforced consistency and locked down access.
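One way to close the artifact-mismatch gap on the build agent itself is to pin the toolchain explicitly instead of relying on whichever SDK the hosted image currently ships with. The sketch below assumes the built-in `UseDotNet@2` task sits at the top of a job’s `steps`; the `'8.0.x'` version string is an illustrative choice, and an exact SDK version can be given instead for a stricter pin.

```yaml
steps:
# Install a known .NET SDK before any build step runs, so every pipeline run
# compiles with the same toolchain rather than the image's default SDK.
- task: UseDotNet@2
  displayName: 'Pin .NET SDK'
  inputs:
    packageType: 'sdk'
    # Illustrative: '8.0.x' takes the newest 8.0 patch; an exact version pins harder.
    version: '8.0.x'
```

If the repository already contains a `global.json`, the same task can follow it through its `useGlobalJson: true` input, which keeps developer machines and the agent on a single version.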

Choosing the Right Tools

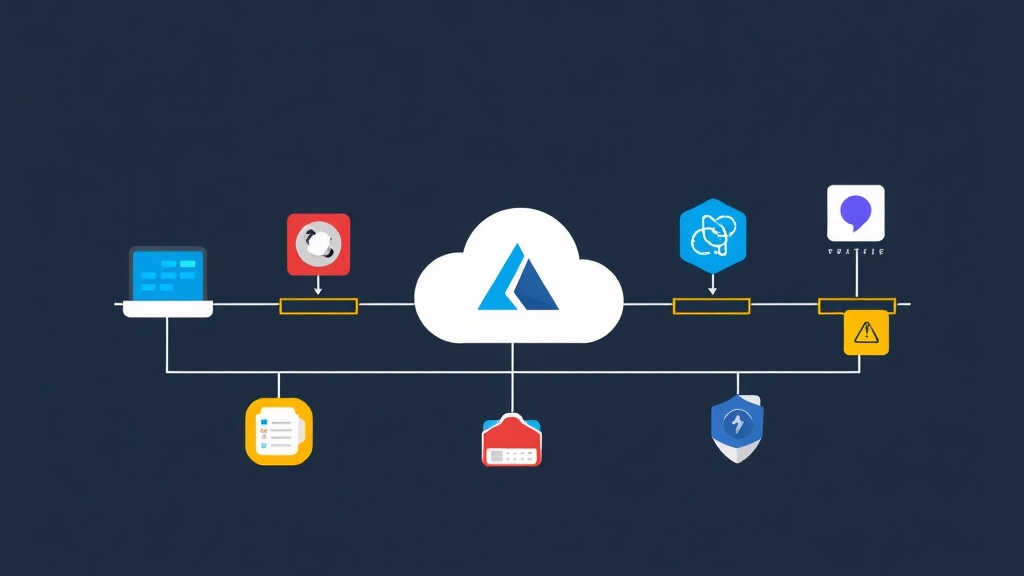

We didn’t pick Azure DevOps blindly. Jenkins was our first thought, but we didn’t want the tax of managing servers and patching endless plugins. GitHub Actions was a close second since our code lived there. However, since our infrastructure was already deep in the Azure ecosystem, Azure DevOps offered the path of least resistance.

It provides native integration that is tough to ignore. Service Connections handle authentication via Service Principals, meaning we haven’t touched a password or a publish profile in half a year. For an Azure-centric stack, the flow between Repos, Pipelines, and App Service is seamless. It cuts out the ‘glue code’ that usually breaks in other tools.

The Blueprint: Multi-Stage YAML Pipelines

My current strategy centers on multi-stage YAML pipelines. By treating the deployment as ‘Pipeline as Code,’ we version-control our release logic just like our application code, so every change to the release process goes through pull-request review and leaves an audit trail in Git history.

Step 1: The Secure Connection

Your foundation is the Service Connection, the secure bridge between Azure DevOps and your Azure subscription. I always use ‘Service Principal (automatic).’ It creates an app registration in Microsoft Entra ID and grants it the Contributor role, scoped to the subscription or to a single resource group. That is significantly more secure than deploying under individual developers’ accounts.
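Once the connection exists, pipeline steps refer to it only by name, so no credentials ever appear in the YAML. A quick way to confirm it authenticates is a throwaway step using the built-in `AzureCLI@2` task. The connection name matches the one used in the deployment stage of this article; running `az account show` is an illustrative check, not a step from the pipeline described here.

```yaml
steps:
# Sign in as the service principal behind 'My-Azure-Service-Connection'
# and print the subscription it can see; no password is stored anywhere.
- task: AzureCLI@2
  displayName: 'Verify service connection'
  inputs:
    azureSubscription: 'My-Azure-Service-Connection'
    scriptType: 'bash'
    scriptLocation: 'inlineScript'
    inlineScript: 'az account show --output table'
```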

Step 2: The CI Logic (Building the Artifact)

The first stage is Continuous Integration. We need to know the code compiles and passes tests before it goes anywhere. Here is the streamlined YAML I use for our .NET services, which consistently finishes in under 5 minutes:

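```yaml
trigger:
- main

stages:
- stage: Build
  displayName: 'Build and Package'
  jobs:
  - job: Build
    pool:
      vmImage: 'ubuntu-latest'
    steps:
    # Run the unit tests first; a failing test fails the stage and blocks deployment.
    # The '**/*Tests.csproj' glob assumes test projects follow that naming convention.
    - task: DotNetCoreCLI@2
      displayName: 'Run tests'
      inputs:
        command: 'test'
        projects: '**/*Tests.csproj'
        arguments: '--configuration Release'
    # Publish every web project and zip the output into the staging directory.
    - task: DotNetCoreCLI@2
      displayName: 'Publish'
      inputs:
        command: 'publish'
        publishWebProjects: true
        arguments: '--configuration Release --output $(Build.ArtifactStagingDirectory)'
        zipAfterPublish: true
    # Upload the zip(s) as the 'web-app' artifact consumed by the Deploy stage.
    - task: PublishBuildArtifacts@1
      inputs:
        PathtoPublish: '$(Build.ArtifactStagingDirectory)'
        ArtifactName: 'web-app'
```

Step 3: The CD Phase (Shipping to Production)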

Once the artifact is verified, we trigger Continuous Deployment. I use ‘Environments’ in Azure DevOps to track exactly which version is live. The ‘runOnce’ strategy, which executes the deployment steps a single time with no rolling or canary phases, works perfectly for 90% of standard web applications.

```yaml
- stage: Deploy
  displayName: 'Deploy to Production'
  dependsOn: Build
  condition: succeeded()
  jobs:
  - deployment: Deploy
    environment: 'production'
    pool:
      vmImage: 'ubuntu-latest'
    strategy:
      runOnce:
        deploy:
          steps:
          # Deployment jobs download this run's artifacts into
          # $(Pipeline.Workspace)/<artifact name> automatically.
          - task: AzureWebApp@1
            inputs:
              azureSubscription: 'My-Azure-Service-Connection'
              appType: 'webAppLinux'
              appName: 'itfromzero-prod-app'
              package: '$(Pipeline.Workspace)/web-app/**/*.zip'
```

Hardening the Pipeline for Production

Deploying code is only half the battle. Over the last few months, we’ve added Deployment Slots and Variable Groups to take most of the remaining risk out of releases.

Slots let us push to ‘staging’ first. We run smoke tests on the actual Azure hardware without bothering a single user. Once validated, we perform an ‘instant swap.’ This results in zero downtime and zero stress.
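A slot-based release can be sketched in the same YAML by replacing the single `AzureWebApp@1` step in the Deploy stage with three steps: deploy to the slot, probe it, then swap. This is a sketch under assumptions: the resource group `itfromzero-rg`, the slot name `staging`, and the `/health` endpoint are hypothetical, and the slot URL assumes the classic `<app>-<slot>.azurewebsites.net` host name pattern.

```yaml
steps:
# 1. Deploy the package to the 'staging' slot instead of production.
- task: AzureWebApp@1
  inputs:
    azureSubscription: 'My-Azure-Service-Connection'
    appType: 'webAppLinux'
    appName: 'itfromzero-prod-app'
    deployToSlotOrASE: true
    resourceGroupName: 'itfromzero-rg'   # hypothetical resource group
    slotName: 'staging'                  # hypothetical slot name
    package: '$(Pipeline.Workspace)/web-app/**/*.zip'
# 2. Smoke test: the step fails if the hypothetical /health endpoint returns
#    an HTTP error; transient errors such as 503 are retried up to 5 times.
- script: >
    curl --fail --silent --show-error
    --retry 5 --retry-delay 10
    https://itfromzero-prod-app-staging.azurewebsites.net/health
  displayName: 'Smoke test staging slot'
# 3. Swap staging into production only after the smoke test has passed.
- task: AzureAppServiceManage@0
  inputs:
    azureSubscription: 'My-Azure-Service-Connection'
    Action: 'Swap Slots'
    WebAppName: 'itfromzero-prod-app'
    ResourceGroupName: 'itfromzero-rg'
    SourceSlot: 'staging'
```

Because each step only runs if the previous one succeeded, a failed smoke test leaves production untouched.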

We also linked Variable Groups to Azure Key Vault. This keeps sensitive secrets, like database connection strings, out of our YAML files. Separating configuration from code is non-negotiable for security compliance.
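In YAML, a Key Vault-backed variable group is pulled in with a `group` entry, and each secret selected in the group then behaves like an ordinary secret pipeline variable. The sketch below assumes a hypothetical group named `prod-keyvault-secrets` exposing a hypothetical Key Vault secret `DbConnectionString`; handing it to App Service through the `appSettings` input of `AzureWebApp@1` is one option among several.

```yaml
- stage: Deploy
  # Secrets are fetched from the Key Vault-linked group at run time,
  # so nothing sensitive is stored in this file.
  variables:
  - group: 'prod-keyvault-secrets'   # hypothetical variable group name
  jobs:
  - deployment: Deploy
    environment: 'production'
    pool:
      vmImage: 'ubuntu-latest'
    strategy:
      runOnce:
        deploy:
          steps:
          - task: AzureWebApp@1
            inputs:
              azureSubscription: 'My-Azure-Service-Connection'
              appType: 'webAppLinux'
              appName: 'itfromzero-prod-app'
              package: '$(Pipeline.Workspace)/web-app/**/*.zip'
              # ASP.NET Core maps the double underscore to ':', i.e.
              # ConnectionStrings:Default. Secret values are masked in logs.
              appSettings: '-ConnectionStrings__Default "$(DbConnectionString)"'
```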

The 6-Month Verdict

The results were immediate. Our deployment frequency jumped from once every two weeks to 4-5 times per day. We no longer fear Fridays. The ‘it works on my machine’ excuse is dead because every build happens on a clean, hosted agent.

If I were starting today, I’d set this up on day one. The initial three-hour investment in YAML paid for itself in the first week of automated releases. If you work in the Microsoft ecosystem, mastering this pipeline is the fastest way to stop being a firefighter and start being a builder.