Breaking the Virtualization Ceiling

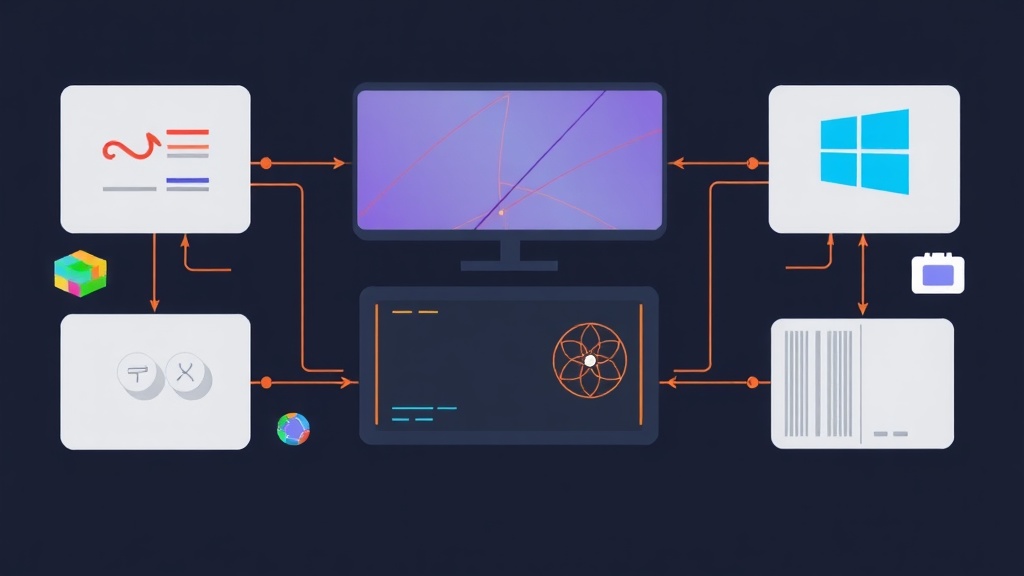

Virtualizing a basic Linux web server is a breeze. However, everything changes when you try to run a high-end Windows gaming rig or a CUDA-heavy AI instance inside Proxmox. Standard virtual display adapters like VirtIO-GPU are fine for 2D desktops, but they crumble when rendering 144Hz frames in Cyberpunk 2077, and they cannot run CUDA at all, which rules out training an 8-billion-parameter Llama 3 model. You simply cannot bridge that gap with emulated or paravirtualized graphics.

GPU Passthrough is the fix. This technique gives a specific VM total control over your physical PCIe graphics card. The guest OS interacts with the hardware directly, bypasses the hypervisor, and hits roughly 96% to 99% of native bare-metal performance. I have run this setup for over six months for remote Stable Diffusion rendering. While the initial setup is tedious, the results are indistinguishable from a physical PC.

Core Concepts: IOMMU and VFIO

You need to master two technologies before jumping into the command line: IOMMU and VFIO.

IOMMU (Input-Output Memory Management Unit) acts as a hardware-level traffic controller for device memory access. Known as Intel VT-d or AMD-Vi, it translates and restricts the DMA (direct memory access) requests a PCIe device makes, so a card assigned to a VM can only reach that VM’s memory. Without it, the host cannot safely confine the GPU’s memory accesses, and passthrough is not possible.

VFIO (Virtual Function I/O) is a Linux kernel driver that serves as a ‘placeholder.’ It stops the Proxmox host from loading its own drivers, like NVIDIA or Nouveau, onto the card. This keeps the GPU in a clean, ‘waiting’ state. If the host grabs the card first, your VM will likely hang or crash during boot.

Step 1: Preparing the Hardware and BIOS

Success depends heavily on your motherboard’s IOMMU grouping. For a smooth experience, the GPU should sit in its own isolated group. If it shares a group with your SATA controller, passing the GPU might accidentally disconnect your hard drives.

- Reboot into your BIOS/UEFI.

- Enable VT-d (Intel) or AMD-Vi / IOMMU (AMD).

- Enable SR-IOV if the option exists.

- Disable CSM (Compatibility Support Module); UEFI is required for modern passthrough.

- Set the Primary Display to iGPU (Internal Graphics). This leaves your discrete GPU free for the VM.

Step 2: Enabling IOMMU in the Kernel

We must tell Proxmox to activate IOMMU at the very start of the boot process. Open your GRUB configuration file:

nano /etc/default/grub

Look for the line GRUB_CMDLINE_LINUX_DEFAULT. Add the following parameters based on your processor type:

For Intel (10th Gen to 14th Gen):

GRUB_CMDLINE_LINUX_DEFAULT="quiet intel_iommu=on iommu=pt"

For AMD (Ryzen series):

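GRUB_CMDLINE_LINUX_DEFAULT="quiet amd_iommu=on iommu=pt"

The iommu=pt flag is vital. It puts devices that stay with the host into passthrough (identity-mapped) mode, so only devices handed to a VM go through DMA translation, which reduces overhead on the host. On AMD, the kernel enables the IOMMU by default once it is turned on in firmware, so amd_iommu=on is harmless but generally redundant. If your host boots via systemd-boot (as ZFS-on-UEFI installs do), put the parameters in /etc/kernel/cmdline and apply them with proxmox-boot-tool refresh instead. Otherwise, save the file, update GRUB, and reboot: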

update-grub

reboot

Verify it worked by running dmesg | grep -e DMAR -e IOMMU -e AMD-Vi. If you see “IOMMU enabled” on Intel, or lines mentioning AMD-Vi on AMD, you are on the right track.
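Once IOMMU is active, the grouping concern from Step 1 can be checked directly from sysfs. The following is a minimal sketch, not an official Proxmox tool: the function name list_iommu_groups is made up for illustration, and its optional argument (an alternative root directory) exists only so the function can be tried against a test directory.

```shell
#!/bin/sh
# Print one line per PCI device, sorted by IOMMU group number.
# $1 (optional, hypothetical override): directory to scan instead of
# the standard sysfs location /sys/kernel/iommu_groups.
list_iommu_groups() {
    root="${1:-/sys/kernel/iommu_groups}"
    for dir in "$root"/*/; do
        group=$(basename "$dir")
        for dev in "$dir"devices/*; do
            # Nothing matched: IOMMU is probably not enabled yet.
            [ -e "$dev" ] || continue
            printf 'group %s: %s\n' "$group" "$(basename "$dev")"
        done
    done | sort -t' ' -k2,2n
}
```

Run list_iommu_groups on the host and look for the group that contains your GPU's address (01:00.0 and 01:00.1 in the example used in this guide). Ideally that group holds nothing but the GPU and its audio function. Adding lspci -nns followed by a device address gives a readable name for any entry you do not recognize.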

Step 3: Isolating the GPU via VFIO

Now we need to identify the hardware IDs of your GPU. Run the following command:

lspci -nn | grep -i nvidia

You will see a pair of IDs, usually looking like this:

01:00.0 VGA compatible controller [0300]: NVIDIA Corporation [10de:2484] (rev a1)
01:00.1 Audio device [0403]: NVIDIA Corporation [10de:228b] (rev a1)

Note the IDs 10de:2484 and 10de:228b. Create a new configuration file to bind these IDs to the VFIO driver:

nano /etc/modprobe.d/vfio.conf

Add this line using your specific IDs:

options vfio-pci ids=10de:2484,10de:228b disable_vga=1

Finally, we must blacklist the host drivers to prevent them from interfering. Create /etc/modprobe.d/pve-blacklist.conf and add:

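blacklist nvidia
blacklist nvidiafb
blacklist nouveau
blacklist radeon
blacklist amdgpu

Leave out the entry for any driver the host itself still needs. If the host console runs on an AMD iGPU, for example, blacklisting amdgpu would take it down too. Then run update-initramfs -u and reboot one last time.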
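Two small helpers can take some of the guesswork out of this step. This is a sketch under stated assumptions: the function names vfio_options_line and bound_to_vfio are invented for illustration, and both only read text on standard input, so neither changes anything on the host by itself.

```shell
#!/bin/sh
# Build the vfio.conf "options" line from `lspci -nn` output on stdin.
# Every [vvvv:dddd] vendor:device pair found is joined with commas;
# class codes such as [0300] contain no colon and are ignored.
vfio_options_line() {
    ids=$(grep -oE '\[[0-9a-f]{4}:[0-9a-f]{4}\]' | tr -d '[]' | paste -sd, -)
    [ -n "$ids" ] || return 1
    printf 'options vfio-pci ids=%s disable_vga=1\n' "$ids"
}

# Read `lspci -nnk` output on stdin. Succeed only if at least one
# "Kernel driver in use:" line exists and every such line names vfio-pci.
bound_to_vfio() {
    awk '/Kernel driver in use:/ { seen = 1; if ($NF != "vfio-pci") bad = 1 }
         END { exit ((seen && !bad) ? 0 : 1) }'
}
```

Before the reboot, lspci -nn | grep -i nvidia | vfio_options_line prints the line to paste into vfio.conf. After the reboot, lspci -nnk -s 01:00 | bound_to_vfio && echo "GPU is held by vfio-pci" confirms that the host driver did not grab the card first. The address 01:00 comes from the example output above, so substitute your own.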

Step 4: Configuring the Proxmox VM

When you build your VM, specific settings are mandatory for hardware acceleration. Don’t use the default settings or the GPU won’t initialize.

- System: Set Machine to q35 and BIOS to OVMF (UEFI). Add an EFI disk.

- Display: Set this to none. We want the GPU to handle all output.

- CPU: Change the Type to host. This exposes the host CPU’s full instruction set to the VM, including the AVX/AVX2 extensions that many games and AI frameworks require.

Go to the Hardware tab, click Add -> PCI Device. Choose your GPU (the .0 entry). Ensure All Functions, Primary GPU, and PCI-Express are all checked. If you miss one, you might get a black screen.
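If you prefer the command line, the same settings can be applied with qm set. The sketch below only prints the command so you can review it before running it. The function name print_qm_set and the VM ID 100 in the usage line are placeholders, and the EFI disk is left out because it depends on your storage name.

```shell
#!/bin/sh
# Print (do not run) a `qm set` command matching the GUI settings above.
#   $1 = VM ID
#   $2 = GPU PCI address without the function suffix (e.g. 01:00);
#        omitting the suffix passes all functions, like "All Functions".
# pcie=1 corresponds to "PCI-Express", x-vga=1 to "Primary GPU".
print_qm_set() {
    vmid="$1"
    addr="$2"
    printf 'qm set %s --machine q35 --bios ovmf --cpu host --vga none --hostpci0 %s,pcie=1,x-vga=1\n' \
        "$vmid" "$addr"
}
```

For example, print_qm_set 100 01:00 prints a single qm set line for VM 100. Once the output looks right for your VM ID and PCI address, paste it into the Proxmox shell to apply it.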

Step 5: Real-World Performance and Optimization

For Windows users, NVIDIA used to trigger “Error 43” when it detected a VM. While newer drivers are more lenient, you should still hide the hypervisor. Edit your VM config file at /etc/pve/qemu-server/[VM_ID].conf and modify the CPU line:

cpu: host,hidden=1,hv-vendor-id=proxmox

After six months of testing, I’ve found that your choice of streaming protocol matters more than raw GPU power for remote play. Skip Windows Remote Desktop (RDP) for gaming; by default it renders through its own virtual display driver rather than your GPU, and it is not built for high-frame-rate streaming. Use Parsec or Sunshine/Moonlight instead. On a local 1Gbps network, I see latencies as low as 2ms to 5ms, making it feel like the server is under my desk.

Final Thoughts

GPU Passthrough turns a standard Proxmox node into a powerhouse that can handle anything from 4K gaming to training LLMs. While it requires navigating BIOS menus and kernel parameters, the flexibility is unmatched. You can spin up a gaming VM in the evening and a dedicated AI workstation in the morning on the same hardware. Keep an eye on your IOMMU groups after any BIOS updates, as manufacturers often change how PCIe lanes are mapped, which can break your configuration.