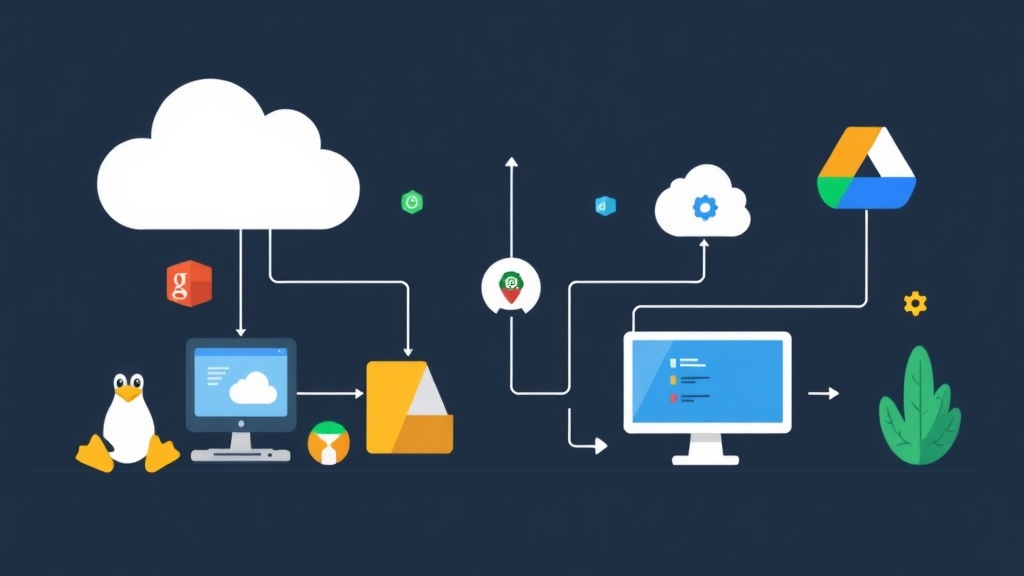

Managing Cloud Storage from the Linux Terminal

Moving files between Google Drive, Amazon S3, or OneDrive usually involves a browser or a resource-heavy desktop client. These aren’t options on a headless Linux server. You need a way to move data without a GUI. Rclone fills this gap perfectly. Often called the “rsync for cloud storage,” it lets you manage files across 70+ providers using standard terminal commands.

Rclone vs. Traditional Cloud Tools

Linux administrators often default to official tools, but Rclone usually offers a better experience for three main reasons.

- Unified Syntax: Tools like aws-cli or gsutil work great for their own platforms, but they require learning a new syntax for every provider. Rclone uses the same commands regardless of the backend.

- Smart FUSE Mounts: Standard sync clients often force you to download everything to your local disk. Rclone mounts cloud storage as a virtual directory. You only fetch data when you open a file, which is a lifesaver for servers with small SSDs.

- API Abstraction: Writing custom Python scripts for cloud APIs is a maintenance nightmare. Rclone handles the API updates and authentication logic so you don’t have to.
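To illustrate the unified syntax point, here is a minimal sketch that runs the same lsd subcommand against several remotes. The function name list_top_level is made up for this example, and it assumes the remotes you pass in are already configured.

```shell
#!/usr/bin/env bash
# List the top-level directories of each remote given as an argument.
# The command is identical for every backend; only the remote name changes.
list_top_level() {
    local remote
    for remote in "$@"; do
        echo "== ${remote} =="
        rclone lsd "${remote}:"
    done
}
```

Calling list_top_level my-gdrive my-s3 would list both backends with identical syntax. Here my-s3 is a hypothetical second remote.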

Performance and Trade-offs

Rclone is built for speed, but it requires a different mindset than “set-and-forget” desktop apps.

The Advantages

- Minimal Footprint: In my testing on an Ubuntu 22.04 instance, Rclone used just 18MB of RAM while idling. Compare that to the 200MB+ often consumed by official background sync daemons.

- Local Encryption: The “crypt” overlay ensures your data is encrypted before it leaves your server. Even if your cloud provider is breached, your files remain unreadable.

- Bandwidth Shaping: You can cap speeds using --bwlimit 10M. This prevents a large backup from saturating your server’s 1Gbps uplink during peak traffic hours.
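The bandwidth cap can also follow a timetable instead of a single fixed value. The sketch below assumes a working day of 08:00 to 23:00 and a hypothetical helper named copy_throttled. Adjust the times and limits to your own traffic pattern.

```shell
#!/usr/bin/env bash
# Copy a directory with a bandwidth timetable:
# 10 MiB/s from 08:00, unlimited again from 23:00 (illustrative values).
copy_throttled() {
    local src="$1" dest="$2"
    rclone copy "$src" "$dest" \
        --bwlimit "08:00,10M 23:00,off" \
        --progress
}
```

For example, copy_throttled /home/user/data my-gdrive:backups would throttle the upload during the day and run at full speed overnight.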

The Challenges

- Setup Complexity: Configuring OAuth for Google or Microsoft on a remote server requires a “headless” workaround. It takes about 5 minutes longer than a standard login.

- Manual Syncing: Rclone doesn’t watch for file changes by default. To keep both sides current, you must schedule runs yourself with cron or systemd, using sync for one-way mirroring or the bisync command for two-way synchronization.
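One way to schedule runs with systemd is a oneshot service paired with a timer. The following is a rough sketch, not a definitive setup: the unit name rclone-sync, the 03:00 schedule, and the helper write_timer_units are all assumptions made for illustration.

```shell
#!/usr/bin/env bash
# Write a oneshot service and a nightly timer into the given directory.
# $1 = target directory, $2 = full command line for ExecStart (absolute path).
write_timer_units() {
    local dir="$1" cmd="$2"
    cat > "${dir}/rclone-sync.service" <<EOF
[Unit]
Description=Scheduled rclone run

[Service]
Type=oneshot
ExecStart=${cmd}
EOF
    cat > "${dir}/rclone-sync.timer" <<EOF
[Unit]
Description=Run rclone-sync.service every night

[Timer]
OnCalendar=*-*-* 03:00:00
Persistent=true

[Install]
WantedBy=timers.target
EOF
}
```

As root, you could call write_timer_units /etc/systemd/system "/usr/bin/rclone sync /var/www/html my-gdrive:web-mirror", then run systemctl daemon-reload followed by systemctl enable --now rclone-sync.timer.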

Recommended Environment

For the best results, use these specifications:

- OS: Ubuntu 22.04 LTS or Debian 12.

- Permissions: A user with sudo access for the initial installation.

- Hardware: 1GB of RAM is the sweet spot. While it runs on 512MB, large transfers with high concurrency might trigger OOM (Out of Memory) errors.

- Auth Helper: Keep a desktop computer nearby to handle the browser-based login step.

Implementation Guide: Setting Up Rclone

Step 1: Install the Latest Version

Avoid using apt install rclone, as distribution repositories are often months behind. Use the official script to ensure you have the latest API fixes.

sudo -v && curl https://rclone.org/install.sh | sudo bash

Check your version to confirm the installation succeeded:

rclone version

Step 2: Connect to Google Drive

Rclone stores connections as “remotes.” Start the interactive setup by running:

rclone config

- Press n for a new remote and name it my-gdrive.

- Select the number for Google Drive from the list.

- Leave the Client ID and Secret blank unless you have created your own Google Cloud project.

- Select scope 1 for full access.

- When asked about “Auto config,” choose n. This is vital for SSH sessions.

The Headless Trick: Rclone will give you a command like rclone authorize "drive". Run this on your local laptop, which also needs Rclone installed. A browser will open for you to log in. Copy the resulting token back into your server terminal to finish the link.
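Once the token is pasted in, it is worth confirming that the remote actually works before building scripts on top of it. A minimal sketch, assuming a hypothetical helper named check_remote:

```shell
#!/usr/bin/env bash
# Confirm that a remote exists in the rclone config and answers an API call.
check_remote() {
    local name="$1"
    # listremotes prints one configured remote per line, each ending in ":".
    if ! rclone listremotes | grep -qx "${name}:"; then
        echo "remote '${name}' is not configured" >&2
        return 1
    fi
    # "about" asks the backend for quota details, so it exercises the token.
    rclone about "${name}:"
}
```

Running check_remote my-gdrive on the server should print the storage quota if the link succeeded.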

Step 3: Essential Commands

Interacting with the cloud feels just like navigating a local disk.

List your folders:

rclone lsd my-gdrive:

Upload a directory:

rclone copy /home/user/data my-gdrive:backups --progress

Mirror a directory:

Caution: This will delete files in the cloud if they aren’t on your server.

rclone sync /var/www/html my-gdrive:web-mirror

Step 4: Mounting for Local Access

To treat your cloud storage like a plugged-in hard drive, use the mount feature. First, install the FUSE driver:

sudo apt install fuse3 -y

mkdir ~/cloud-data

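rclone mount my-gdrive: ~/cloud-data --vfs-cache-mode full &

Using --vfs-cache-mode full buffers reads and writes in a local disk cache, which improves compatibility with apps that expect normal file semantics such as seeking or appending. The cache uses local disk space, so keep an eye on it on small SSDs.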
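As an alternative to backgrounding the mount with &, the sketch below uses the --daemon flag and pairs it with an explicit unmount. The helper names and the 1G cache ceiling are assumptions chosen for illustration.

```shell
#!/usr/bin/env bash
# Mount a remote in the background and cap the local VFS cache size.
mount_remote() {
    local remote="$1" mountpoint="$2"
    mkdir -p "$mountpoint"
    rclone mount "$remote" "$mountpoint" \
        --vfs-cache-mode full \
        --vfs-cache-max-size 1G \
        --daemon
}

# Cleanly detach the mount (fusermount3 ships with the fuse3 package).
unmount_remote() {
    fusermount3 -u "$1"
}
```

For instance, mount_remote my-gdrive: ~/cloud-data attaches the drive, and unmount_remote ~/cloud-data releases it when you are done.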

Step 5: Automating Your Backups

Don’t run backups manually. Use a simple script to handle the heavy lifting. I use a variation of this for my production databases:

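#!/bin/bash

# rclone-backup.sh

# Stop on the first error so a failed copy never triggers the cleanup

set -euo pipefail

SOURCE="/opt/app/data"

DEST="my-gdrive:backups/$(date +%Y-%m-%d)"

LOG="/var/log/rclone.log"

# Perform the copy with 4 parallel transfers

/usr/bin/rclone copy "$SOURCE" "$DEST" --transfers 4 --log-file="$LOG" --log-level INFO

# Remove backups older than 30 days and the empty dated folders left behind.

# Note: --min-age checks each file's modification time, which rclone copy

# preserves from the source, so verify the effect with --dry-run first.

/usr/bin/rclone delete my-gdrive:backups --min-age 30d --rmdirs

Make the script executable with chmod +x /home/user/rclone-backup.sh, then schedule it in your crontab (crontab -e) to run every night at 3 AM:

0 3 * * * /home/user/rclone-backup.sh

Optimizing Performance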

If you are moving thousands of small files, Rclone might seem sluggish. This is usually due to API latency. To speed things up, add --transfers 8 and --checkers 16 to your command. This forces Rclone to process more files simultaneously. Always use the --dry-run flag when testing new sync scripts. It lets you see exactly what would be deleted or moved without actually changing your data.
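The dry-run habit and the concurrency flags can be combined in one small wrapper. This is only a sketch under stated assumptions: safe_sync is a hypothetical name, and the confirmation prompt is one possible design, not something Rclone provides.

```shell
#!/usr/bin/env bash
# Preview a sync first, then apply it only after explicit confirmation.
safe_sync() {
    local src="$1" dest="$2" answer
    # First pass: report what would be copied or deleted, change nothing.
    rclone sync "$src" "$dest" --dry-run --transfers 8 --checkers 16 || return 1
    printf 'Apply these changes? [y/N] ' >&2
    read -r answer || answer=""
    if [ "$answer" != "y" ]; then
        echo "Aborted."
        return 0
    fi
    # Second pass: the same sync without --dry-run.
    rclone sync "$src" "$dest" --transfers 8 --checkers 16 --progress
}
```

Running safe_sync /var/www/html my-gdrive:web-mirror shows the planned changes and waits for a y before touching any data.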