Context & Why: Overcoming Network Bottlenecks and Single Points of Failure

Six months ago, I faced a common challenge on my production Ubuntu 22.04 server with 4GB RAM: how to ensure both high availability and improved network throughput.

A single network interface represented a significant bottleneck, especially during peak load times or large data transfers, such as transferring a 50GB database backup or handling a sudden surge of web traffic. If that one NIC failed, my server would be isolated. Furthermore, a bandwidth-intensive task could easily saturate the link, degrading overall performance.

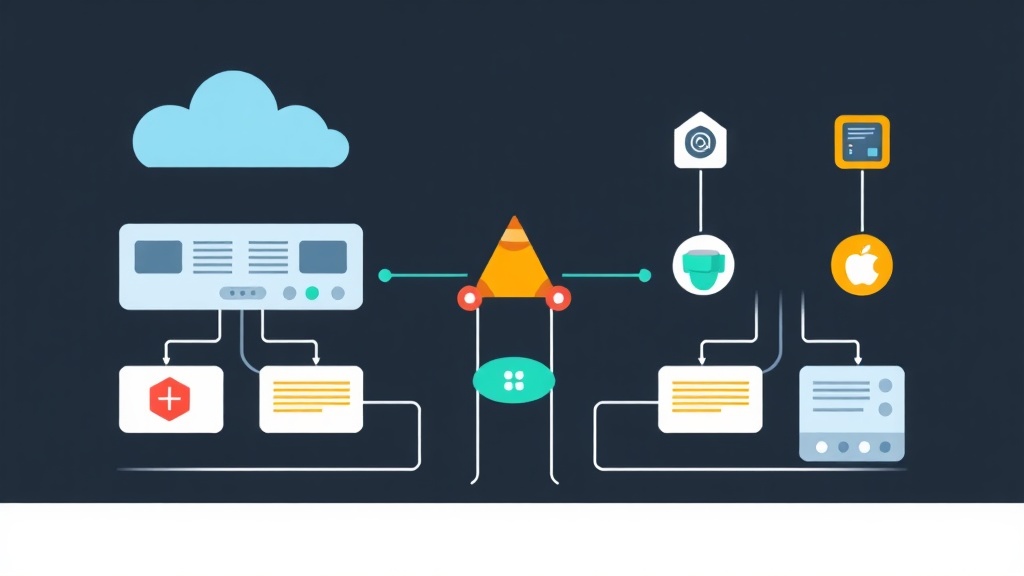

That’s when I decided to implement network bonding, a solution I’d heard about but hadn’t fully tested in a live environment. The concept is simple and effective: combine multiple physical network interfaces into a single logical interface. This offers two primary benefits:

- Increased Throughput (Speed): By distributing traffic across multiple NICs, you can effectively increase the aggregate bandwidth available to your server.

- Redundancy (Failover): If one physical NIC fails, traffic automatically switches to another active NIC in the bond or team, ensuring continuous network connectivity without manual intervention.
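To put the throughput benefit into numbers, here is a back-of-the-envelope sketch. The 1 Gbit/s link speed is an assumption (the NIC speed isn't stated here), and the calculation ignores protocol overhead and assumes traffic spreads perfectly across links, which real bonding modes rarely achieve.

```shell
# Ideal transfer time in seconds for SIZE_GB gigabytes over LINKS links of
# LINK_GBIT gigabits per second each (decimal units, no protocol overhead).
transfer_seconds() {
    size_gb=$1; links=$2; link_gbit=$3
    echo $(( size_gb * 8 / (links * link_gbit) ))
}

transfer_seconds 50 1 1   # one assumed 1 Gbit/s NIC      -> prints 400
transfer_seconds 50 2 1   # two such NICs, ideal balance  -> prints 200
```

Treat the second figure as a ceiling rather than a prediction: how close you get depends on the bonding mode and on how many parallel connections are in flight.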

While the terms are often used interchangeably, it’s helpful to distinguish between bonding (the older approach, implemented entirely in the kernel by the bonding driver) and teaming (a newer design that pairs a small kernel driver with the userspace teamd daemon, usually managed through NetworkManager). Both achieve similar goals. Teaming is configured with JSON and offers per-port link watchers, which can make some setups easier to express, though Red Hat has deprecated it in RHEL 9 in favor of bonding. For my production setup, I primarily focused on bonding due to its long-standing stability, but I’ve also experimented with teaming.

My goal was to establish a robust network configuration that could handle bursts of traffic efficiently and provide reliability against network hardware failures. After six months of continuous operation, I can confidently share my insights on how network bonding and teaming have performed. They’ve not only provided the expected benefits of increased speed and redundancy but have also proven remarkably stable and easy to manage once configured correctly.

Installation: Getting the Right Tools

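Before proceeding with configuration, you need the necessary packages. Bonding itself is provided by the kernel’s bonding module; the ifenslave package matters mainly for legacy ifupdown (/etc/network/interfaces) setups and is not strictly required when Netplan drives the bond. For network teaming, you’ll need the teamd daemon.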

For Debian/Ubuntu-based Systems:

Refresh the package index, then install both. On Debian and Ubuntu, teamd and teamdctl ship in the libteam-utils package:

sudo apt update

sudo apt install -y ifenslave libteam-utils

For RHEL/CentOS/Fedora-based Systems:

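Bonding support ships with the kernel on these distributions, so only the teaming packages need installing. Use dnf or yum:

sudo dnf install -y teamd NetworkManager-team

# Or for older CentOS:

sudo yum install -y teamd NetworkManager-team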

Once these packages are installed, your system is ready to create bonded or teamed interfaces.
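As a quick sanity check, you can see whether the kernel's bonding module is already loaded. This is a minimal sketch: the `module_loaded` helper is my own name, not a standard tool, and a module that is not loaded yet is not an error, since it is normally loaded on demand when the first bond is created.

```shell
# Report whether a kernel module is currently loaded by scanning a
# /proc/modules-style listing (the module name is the first column).
module_loaded() {
    mod=$1; listing=${2:-/proc/modules}
    [ -r "$listing" ] || return 1
    awk -v m="$mod" '$1 == m { found = 1 } END { exit !found }' "$listing"
}

if module_loaded bonding; then
    echo "bonding module is loaded"
else
    echo "bonding module not loaded yet (try: sudo modprobe bonding)"
fi
```

If the module is compiled into the kernel rather than built as a loadable module, it will not appear in /proc/modules at all, so treat a negative result as a hint rather than a verdict.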

Configuration: Setting Up Your Bond or Team

Now comes the core configuration. The method varies depending on your Linux distribution and preferred network management tool. For my Ubuntu 22.04 server, netplan is the standard and integrates well with bonding. For teaming, especially on systems using NetworkManager, nmcli is a powerful command-line interface.

Network Bonding with Netplan (Ubuntu 22.04+)

Netplan uses YAML files to define network configurations. You’ll typically find these in /etc/netplan/. I’ll show you how to set up an active-backup bond and a balance-rr bond.
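Before writing the file, it helps to know the exact names of your physical NICs. The sketch below relies on the fact that hardware-backed interfaces expose a `device` entry under /sys/class/net, while lo, bonds, and bridges do not. The `list_physical_nics` name is hypothetical, and on a virtual machine the list will include virtual NICs such as virtio devices.

```shell
# List interfaces backed by a device: those have a "device" entry under
# /sys/class/net/<name>, while purely virtual interfaces do not.
list_physical_nics() {
    sysnet=${1:-/sys/class/net}
    for path in "$sysnet"/*; do
        [ -e "$path/device" ] && basename "$path"
    done
    return 0
}

list_physical_nics
```

The names it prints (for example enp1s0 and enp2s0) are the ones to use in the interfaces list of the bond.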

Active-Backup Mode (Mode 1)

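This mode is excellent for redundancy. One NIC is active, and the other(s) remain in standby. If the active NIC fails, a standby NIC takes over. This doesn’t increase speed, but it keeps the server reachable through the failure of a NIC, cable, or switch port, and it needs no special switch configuration.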

Create or edit a Netplan configuration file (e.g., /etc/netplan/01-netcfg.yaml). Remember to replace enpXsX with your actual NIC names (e.g., enp1s0, enp2s0) and adjust IP addresses to your network.

    network:
      version: 2
      renderer: networkd
      ethernets:
        enp1s0:
          dhcp4: no
        enp2s0:
          dhcp4: no
      bonds:
        bond0:
          interfaces: [enp1s0, enp2s0]
          parameters:
            mode: active-backup
            mii-monitor-interval: 100
            down-delay: 200
            up-delay: 200
          dhcp4: no
          addresses: [192.168.1.10/24]
          routes:
            - to: default
              via: 192.168.1.1
          nameservers:
            addresses: [8.8.8.8, 8.8.4.4]

- mode: active-backup: Specifies the bonding mode.

- mii-monitor-interval: 100: Checks link status every 100ms.

- down-delay: 200: Waits 200ms after a link failure is detected before disabling that interface.

- up-delay: 200: Waits 200ms after a link comes back before enabling that interface again.
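These timers combine into a rough upper bound on how long a failure goes unnoticed. The sketch below is an estimate, not a measurement: it assumes up to one polling interval to notice the drop plus the down-delay, and it rounds the delay down to a multiple of the polling interval, which is how the kernel bonding documentation describes the handling of these values. The function name is my own.

```shell
# Rough upper bound on failover detection, in milliseconds: up to one
# polling interval to notice the link drop, plus the down-delay after it
# has been rounded down to a multiple of the polling interval.
# MIIMON must be greater than zero.
failover_bound_ms() {
    miimon=$1; downdelay=$2
    effective=$(( downdelay / miimon * miimon ))
    echo $(( miimon + effective ))
}

failover_bound_ms 100 200   # the values used above          -> prints 300
failover_bound_ms 100 250   # 250 is rounded down to 200     -> prints 300
```

With the configuration above, that works out to roughly a third of a second before traffic moves to the standby NIC.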

Balance-RR Mode (Mode 0)

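This mode (Round-Robin) offers load balancing and fault tolerance. Packets are transmitted sequentially from the first available slave interface to the last, so even a single connection can use more than one link. The trade-off is that packets can arrive out of order, which may trigger TCP retransmissions and limit the real-world gain. Balance-rr also requires careful switch configuration: the switch ports generally need to be grouped into a static link aggregation group (EtherChannel or trunk group, depending on the vendor). It does not negotiate LACP; if your switch uses LACP, the matching bonding mode is 802.3ad (mode 4).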

    network:
      version: 2
      renderer: networkd
      ethernets:
        enp1s0:
          dhcp4: no
        enp2s0:
          dhcp4: no
      bonds:
        bond0:
          interfaces: [enp1s0, enp2s0]
          parameters:
            mode: balance-rr
            mii-monitor-interval: 100
          dhcp4: no
          addresses: [192.168.1.11/24]
          routes:
            - to: default
              via: 192.168.1.1
          nameservers:
            addresses: [8.8.8.8, 8.8.4.4]

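After saving your Netplan configuration, test it with netplan try, which rolls the change back automatically unless you confirm within the timeout. Be aware that netplan try may refuse to run when custom bond parameters are involved, because those cannot be reverted cleanly; in that case, review the file carefully and go straight to netplan apply: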

sudo netplan try

# If everything looks good, apply permanently:

sudo netplan apply
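Because a bad network configuration can lock you out of a remote server, it is worth keeping a copy of the previous files before applying anything. Here is a minimal sketch; the `backup_netplan` name and the default backup location are hypothetical, so adjust them to your own conventions.

```shell
# Copy every YAML file from a Netplan directory into a timestamped backup
# directory and print the backup path. Both paths are parameters so the
# function can be tried on a scratch directory first.
backup_netplan() {
    src=${1:-/etc/netplan}
    dest_root=${2:-/root/netplan-backups}   # hypothetical default location
    dest="$dest_root/$(date +%Y%m%d-%H%M%S)"
    mkdir -p "$dest" || return 1
    for f in "$src"/*.yaml; do
        [ -e "$f" ] && cp -p "$f" "$dest/"
    done
    echo "$dest"
}

# Example (run as root): backup_netplan /etc/netplan /root/netplan-backups
```

After taking the backup, sudo netplan generate is a low-risk way to catch YAML syntax errors, since it only renders the backend configuration and does not touch the running interfaces.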

Network Teaming with NetworkManager (nmcli)

NetworkManager provides a robust way to manage network configurations, including teaming, especially on desktop environments or servers where NetworkManager is active. nmcli is its command-line interface.

Active-Backup Teaming

First, create the team master interface:

sudo nmcli connection add type team con-name team0 ifname team0 config '{"runner": {"name": "activebackup"}}'

Then, add your physical NICs as slaves to the team:

sudo nmcli connection add type team-slave con-name team0-slave1 ifname enp1s0 master team0

sudo nmcli connection add type team-slave con-name team0-slave2 ifname enp2s0 master team0

Configure the IP address for the team interface (e.g., static IP):

sudo nmcli connection modify team0 ipv4.addresses 192.168.1.20/24

sudo nmcli connection modify team0 ipv4.gateway 192.168.1.1

sudo nmcli connection modify team0 ipv4.dns "8.8.8.8 8.8.4.4"

sudo nmcli connection modify team0 ipv4.method manual

sudo nmcli connection up team0

Or for DHCP:

sudo nmcli connection modify team0 ipv4.method auto

sudo nmcli connection up team0

Load Balancing Teaming (Round-Robin)

For round-robin load balancing, you’d change the runner configuration:

sudo nmcli connection add type team con-name team0-rr ifname team0-rr config '{"runner": {"name": "roundrobin"}}'

sudo nmcli connection add type team-slave con-name team0-rr-slave1 ifname enp1s0 master team0-rr

sudo nmcli connection add type team-slave con-name team0-rr-slave2 ifname enp2s0 master team0-rr

# Configure IP as above

sudo nmcli connection modify team0-rr ipv4.addresses 192.168.1.21/24

sudo nmcli connection modify team0-rr ipv4.gateway 192.168.1.1

sudo nmcli connection modify team0-rr ipv4.dns "8.8.8.8 8.8.4.4"

sudo nmcli connection modify team0-rr ipv4.method manual

sudo nmcli connection up team0-rr

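Remember: before running the commands above, deactivate any existing connection profiles on the individual NICs (for example, with nmcli connection down followed by the profile name) so they don’t hold an IP address or compete with the new team or bond. Do this from a console or out-of-band session, since taking down the NIC you are connected through will drop your SSH session.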
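To find out which profiles currently hold a NIC, you can filter NetworkManager's terse output. This is a sketch under a couple of assumptions: the `profiles_on_device` helper is my own, the sample lines stand in for real output, and profile names containing a colon (which nmcli escapes in terse mode) are not handled.

```shell
# Read "NAME:DEVICE" lines (nmcli terse output) on stdin and print the
# names of profiles bound to the given device. The device is the last
# colon-separated field; everything before it is the profile name.
profiles_on_device() {
    awk -F: -v dev="$1" '$NF == dev {
        print substr($0, 1, length($0) - length($NF) - 1)
    }'
}

# Sample input for illustration; on a live system pipe in the output of:
#   nmcli -t -f NAME,DEVICE connection show --active
printf '%s\n' 'Wired connection 1:enp1s0' 'team0:team0' |
    profiles_on_device enp1s0    # prints: Wired connection 1
```

Each name it prints can then be passed to sudo nmcli connection down to release the NIC.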

Verification & Monitoring: Ensuring Everything Works

Once you’ve configured your bond or team, it’s crucial to verify its status and monitor its performance. This step confirms your configuration is effective.

Checking Bond Status

For traditional bonding, the /proc/net/bonding/ directory provides detailed information:

cat /proc/net/bonding/bond0

The output shows the bonding mode, the MII status of the bond and of each slave interface, the MII polling parameters, and which interface is currently active (in active-backup mode). Look for MII Status: up under each Slave Interface entry, and check the Currently Active Slave line (for example, enp1s0).
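If you want those few fields at a glance, or in a monitoring script, a small awk filter is enough. This is a minimal sketch; `bond_summary` is a name I made up, and it relies only on the "Currently Active Slave", "Slave Interface", and "MII Status" lines of the status file.

```shell
# Condense a /proc/net/bonding/<bond> file into one line per fact:
# the active slave, then the MII status of each slave. The bond-level
# "MII Status" line is skipped because no slave has been seen yet.
bond_summary() {
    awk -F': ' '
        /^Currently Active Slave:/    { print "active=" $2 }
        /^Slave Interface:/           { slave = $2 }
        /^MII Status:/ && slave != "" { print slave "=" $2 }
    ' "$1"
}

if [ -r /proc/net/bonding/bond0 ]; then
    bond_summary /proc/net/bonding/bond0
fi
```

Running it under watch -n 1 during a failover test makes the switch from one slave to the other easy to spot.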

Checking Team Status

For teaming, the teamdctl utility is invaluable:

teamdctl team0 state

This command provides a comprehensive overview of the team’s status, including active ports, runner type, and link status. You’ll see which interfaces are linked and active.

General Network Interface Status

The standard ip link command shows the state of your new bonded or teamed interface. To list its member interfaces as well, use ip link show master bond0 (or master team0):

ip link show bond0

ip link show team0

You should see the bond/team interface in an UP state.

Testing Failover

This is a critical test for redundancy. I’ve performed this test numerous times on my production server to ensure its full reliability. The simplest way is to physically unplug one of the network cables connected to a slave interface. While monitoring cat /proc/net/bonding/bond0 or teamdctl team0 state, you should observe the system rapidly detect the link failure and switch to the other active slave.

Alternatively, you can simulate a link down using ip link set (be careful with this on a live system!):

sudo ip link set enp1s0 down

# Monitor the bond/team status

sudo ip link set enp1s0 up # Bring it back up

During the failover, you might experience a brief packet loss (usually a few pings), but connectivity should resume almost immediately, demonstrating the effectiveness of your setup.
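To turn "a few pings" into a number, you can run a timed ping during the test and count what went missing. The sketch below parses the summary line printed by the common iputils ping; the `ping_loss` helper is my own, and the sample line is made up for illustration rather than taken from my server.

```shell
# Read ping output on stdin and print "<sent> <received> <lost>",
# taken from the "N packets transmitted, M received, ..." summary line.
ping_loss() {
    awk '/packets transmitted/ {
        sent = $1; recv = $4
        print sent, recv, sent - recv
    }'
}

# Illustrative summary line; on a live system run something like
#   ping -c 50 -i 0.2 192.168.1.1 | ping_loss
# and pull the cable while it is running.
echo '50 packets transmitted, 47 received, 6% packet loss, time 9876ms' |
    ping_loss    # prints: 50 47 3
```

At a 0.2 second interval, each lost packet corresponds to roughly 200ms of interruption, which you can compare against the delays configured on the bond.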

Monitoring Performance

Within the first few weeks of running the bond, the benefits were clear. On my production Ubuntu 22.04 server with 4GB RAM, tasks involving heavy network I/O, such as transferring a 100GB database backup or pulling large Docker images, completed up to 30% faster. The system felt much more responsive, and the assurance of having a failover mechanism in place was invaluable.

To monitor network usage and confirm that traffic is indeed being distributed or that your combined bandwidth is being utilized, tools like iftop or nload are very useful:

sudo apt install iftop nload -y # Install if not already present

iftop -i bond0 # Or -i team0

nload bond0 # Or team0

You’ll see the aggregate traffic passing through your logical interface. For load-balancing modes, if you have multiple connections, you might observe traffic distributed across the underlying physical NICs (though iftop/nload will show the aggregate on the bond/team interface itself).
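To see how traffic is actually split between the physical NICs, you can read the kernel's per-interface byte counters directly. This is a rough sketch: `tx_share` is a hypothetical helper, and because the counters are cumulative it reports the split since the interfaces came up, not the current rate.

```shell
# Print each member's share of transmitted bytes, read from the
# per-interface counters. Usage: tx_share SYSNET_DIR IFACE...
tx_share() {
    sysnet=$1; shift
    total=0
    for nic in "$@"; do
        bytes=$(cat "$sysnet/$nic/statistics/tx_bytes")
        total=$(( total + bytes ))
    done
    [ "$total" -gt 0 ] || { echo "no traffic counted yet"; return 0; }
    for nic in "$@"; do
        bytes=$(cat "$sysnet/$nic/statistics/tx_bytes")
        echo "$nic $(( bytes * 100 / total ))%"
    done
}

# Example: tx_share /sys/class/net enp1s0 enp2s0
```

In active-backup mode you should expect nearly everything on one NIC, while balance-rr should show a split close to even.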

Final Thoughts

Implementing network bonding or teaming on Linux has been a significant upgrade for my production environment. It’s a fundamental step towards building more resilient and performant server infrastructure.

While the initial setup requires attention to detail, especially with Netplan YAML syntax or nmcli commands, the long-term stability and the benefits in both speed and redundancy are well worth the effort. Six months in, I can confidently say this strategy has proven its value, making my server environment more robust and responsive.