Why Your Service Needs a Backup IP Address

Picture this: you have a load balancer or a critical gateway server running in production. At 2 AM, the machine crashes. Every service behind it goes dark. Users start getting errors. Your phone starts ringing.

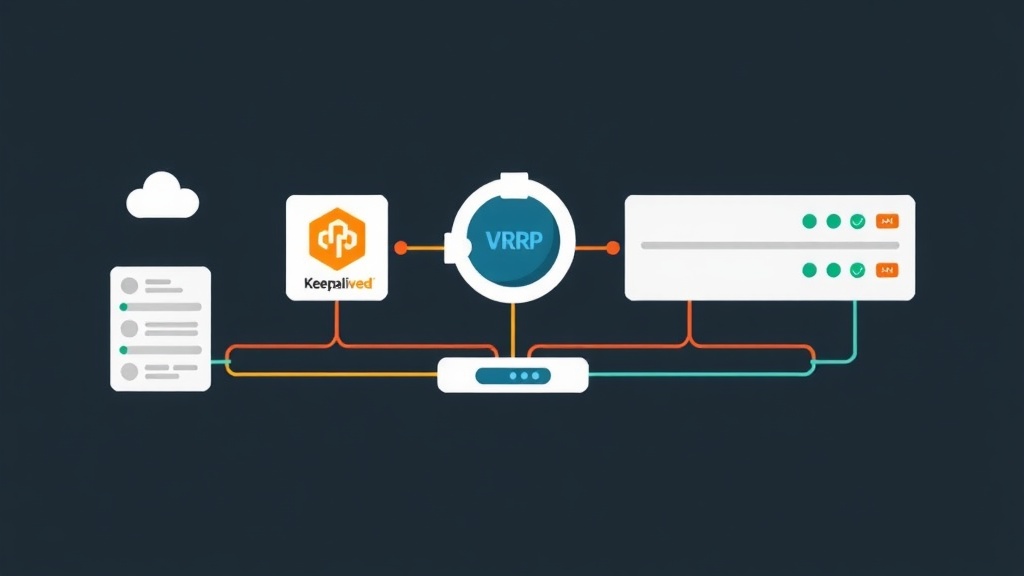

The solution is straightforward on paper — keep a standby server ready to take over. What’s harder is making the IP address follow the failover automatically. That’s exactly what Keepalived and VRRP solve.

VRRP (Virtual Router Redundancy Protocol) lets two or more servers share a single virtual IP address, also called a floating IP or VIP. One server is the MASTER and owns the VIP. If it goes down, a BACKUP server notices that the MASTER’s periodic advertisements have stopped and claims the VIP within a few seconds (roughly 3.6 seconds with the settings used in this guide). No manual intervention. No DNS changes. No reconfiguring clients.

Keepalived is the most widely used open-source VRRP implementation on Linux. I’ve run it in production to protect HAProxy load balancers, Nginx reverse proxies, and custom TCP services. It’s one of those tools you set up once and forget about, until the day it silently saves you at 2 AM.

This guide covers two Ubuntu/Debian servers, but the same steps apply to CentOS/RHEL. Only the package manager command differs.

Installation

Lab Setup

You need two Linux servers on the same network segment. Here’s what we’re working with:

- node1 — 192.168.1.10 (initial MASTER)

- node2 — 192.168.1.11 (BACKUP)

- Virtual IP (VIP) — 192.168.1.100 (the floating address your services point to)

Clients always connect to 192.168.1.100. They never need to know which physical node is behind it. Keepalived handles that invisibly.

Install Keepalived on Both Nodes

Run these on both node1 and node2:

# Ubuntu / Debian

sudo apt update

sudo apt install -y keepalived

# CentOS / RHEL / AlmaLinux

sudo dnf install -y keepalived

Services that listen on the VIP, such as HAProxy or Nginx, need to bind to 192.168.1.100 even on the node that doesn’t currently hold it. Enable that kernel setting on both nodes:

# Allow binding to a non-local IP (required for the VIP)

echo 'net.ipv4.ip_nonlocal_bind = 1' | sudo tee /etc/sysctl.d/99-keepalived.conf

sudo sysctl --system

Configuration

Configure the MASTER Node (node1)

Open the Keepalived config on node1:

sudo nano /etc/keepalived/keepalived.conf

Paste this configuration:

global_defs {
    router_id node1
}

vrrp_instance VI_1 {
    state MASTER
    interface eth0              # replace with your actual NIC name
    virtual_router_id 51        # must match on both nodes
    priority 100                # higher = preferred master
    advert_int 1                # send a VRRP advertisement every 1 second
    authentication {
        auth_type PASS
        auth_pass SecretKey123  # must match on both nodes
    }
    virtual_ipaddress {
        192.168.1.100/24        # the floating VIP
    }
}

Four things worth knowing before you move on:

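- interface eth0: verify your NIC name with ip link show. Cloud and virtualized machines often use names like ens3 or enp0s3.

- virtual_router_id 51: a group ID from 1 to 255. Both nodes must use the same value. Pick one that isn’t already used on your network segment.

- priority 100: the highest priority wins the MASTER role. node2 will be set to 90, so node1 stays preferred.

- auth_pass: a shared password; each node ignores VRRP packets that don’t carry it. Same value on both sides, no exceptions. VRRP PASS authentication uses only the first 8 characters (Keepalived truncates longer values) and sends the password in clear text, so treat it as protection against accidental collisions rather than real security.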
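How quickly does node2 react when node1 goes silent? RFC 3768 (VRRPv2, Keepalived’s default VRRP version) has a BACKUP declare the MASTER dead after Master_Down_Interval = 3 * Advertisement_Interval + Skew_Time, where Skew_Time = (256 - priority) / 256 seconds. The sketch below only evaluates that formula for the values in this guide. It is arithmetic, not a measurement; the function name is illustrative.

```shell
#!/bin/sh
# Evaluate the VRRPv2 (RFC 3768) master-down timer for a BACKUP node:
#   Master_Down_Interval = 3 * Advertisement_Interval + Skew_Time
#   Skew_Time            = (256 - priority) / 256 seconds
# Arguments: advert_int in seconds, then the BACKUP node's priority.
master_down_interval() {
  awk -v adv="$1" -v prio="$2" \
    'BEGIN { printf "%.3f\n", 3 * adv + (256 - prio) / 256 }'
}

# node2 in this guide: advert_int 1, priority 90
echo "node2 declares node1 dead after ~$(master_down_interval 1 90)s of silence"
# prints: node2 declares node1 dead after ~3.648s of silence
```

The skew term is deliberate. When several BACKUP nodes are present, the one with the highest priority times out first and takes over before the others.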

Configure the BACKUP Node (node2)

On node2, the config is nearly identical. Three values change: router_id, state, and priority.

global_defs {
    router_id node2
}

vrrp_instance VI_1 {
    state BACKUP
    interface eth0
    virtual_router_id 51
    priority 90                 # lower than node1
    advert_int 1
    authentication {
        auth_type PASS
        auth_pass SecretKey123
    }
    virtual_ipaddress {
        192.168.1.100/24
    }
}
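virtual_router_id and auth_pass must be identical on both nodes, but priority should differ. You can check the two files mechanically before starting the service. The helper below is a hypothetical sketch, not a Keepalived tool. It assumes you have copied both files to one machine (node1.conf and node2.conf are illustrative names) and that each setting sits on its own line, as in the configs above.

```shell
#!/bin/sh
# Hypothetical pre-flight check for a two-node Keepalived pair.
# Assumes one "keyword value" pair per line, as in the configs in this guide.

# conf_value FILE KEYWORD: print the first value of KEYWORD in FILE,
# ignoring trailing "# ..." comments.
conf_value() {
  awk -v key="$2" '{ sub(/#.*/, "") } $1 == key { print $2; exit }' "$1"
}

# compare_pair FILE_A FILE_B: report settings that must match, and warn
# when the priorities are equal (which leaves the preferred MASTER undefined).
compare_pair() {
  rc=0
  for key in virtual_router_id auth_pass; do
    if [ "$(conf_value "$1" "$key")" != "$(conf_value "$2" "$key")" ]; then
      echo "MISMATCH: $key"
      rc=1
    fi
  done
  if [ "$(conf_value "$1" priority)" = "$(conf_value "$2" priority)" ]; then
    echo "WARNING: both nodes use the same priority"
  fi
  return "$rc"
}

# Usage: compare_pair node1.conf node2.conf && echo "pair looks consistent"
```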

Start and Enable Keepalived

On both nodes:

sudo systemctl enable keepalived

sudo systemctl start keepalived

Optional: Health Check Script

Server-level failure is covered. But what if Nginx crashes while the OS keeps running? Keepalived’s track scripts handle exactly this — they monitor a process and automatically drop the node’s priority if the service dies.

Add this block on both nodes, before the vrrp_instance block:

vrrp_script check_nginx {
    script "/usr/bin/pgrep nginx"
    interval 2    # check every 2 seconds
    weight -20    # subtract 20 from priority if the check fails
    fall 2        # require 2 consecutive failures before triggering
    rise 2        # require 2 consecutive passes before recovering
}

Reference it inside the vrrp_instance block:

vrrp_instance VI_1 {
    ...
    track_script {
        check_nginx
    }
}

Here’s the math: if Nginx stops on node1, its effective priority drops from 100 to 80 (100 − 20). That’s below node2’s 90. Keepalived triggers a failover and moves the VIP — even though node1’s OS is still running fine.
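To make the arithmetic concrete, here is a small sketch that recomputes each node's advertised priority and picks the winner. It models only what this section describes: a negative weight applied while the check is failing. It is not how Keepalived is implemented internally. On a tie, real VRRP prefers the node with the higher primary IP address, which the sketch simply reports as "tie". Both function names are illustrative.

```shell
#!/bin/sh
# effective_priority BASE WEIGHT STATUS
# Prints the priority a node advertises. A negative WEIGHT is applied only
# while the tracked check is failing (STATUS = fail), as with check_nginx.
effective_priority() {
  if [ "$3" = "fail" ]; then
    echo $(( $1 + $2 ))
  else
    echo "$1"
  fi
}

# elect P1 P2: name the node with the higher effective priority.
elect() {
  if [ "$1" -gt "$2" ]; then echo node1
  elif [ "$2" -gt "$1" ]; then echo node2
  else echo tie
  fi
}

p1=$(effective_priority 100 -20 fail)  # Nginx down on node1
p2=$(effective_priority 90 -20 ok)     # Nginx healthy on node2
echo "node1=$p1 node2=$p2 -> VIP on $(elect "$p1" "$p2")"
# prints: node1=80 node2=90 -> VIP on node2
```

For this to work, the weight must exceed the priority gap. With weight -5, node1 would drop only to 95, still above node2's 90, and the VIP would stay on a node whose Nginx is down.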

Reload after any config change:

sudo systemctl reload keepalived

Verification and Monitoring

Check Which Node Holds the VIP

On node1, run:

ip addr show eth0

Expect to see the VIP listed alongside the primary IP:

2: eth0: <BROADCAST,MULTICAST,UP,LOWER_UP>
    inet 192.168.1.10/24 brd 192.168.1.255 scope global eth0
    inet 192.168.1.100/24 scope global secondary eth0

On node2, the VIP won’t appear — it’s sitting in BACKUP state, waiting.
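The same check can be scripted, for example as a cron job or monitoring probe. This is a minimal sketch that assumes the VIP and interface from this guide. holds_vip is a hypothetical helper name, not part of Keepalived.

```shell
#!/bin/sh
# holds_vip VIP: read `ip -4 addr show` output on stdin and succeed
# if VIP is listed as one of the inet addresses (exact match, prefix ignored).
holds_vip() {
  awk -v vip="$1" '
    $1 == "inet" { split($2, addr, "/"); if (addr[1] == vip) found = 1 }
    END { if (found) exit 0; exit 1 }'
}

if ip -4 addr show dev eth0 2>/dev/null | holds_vip 192.168.1.100; then
  echo "this node holds 192.168.1.100 (MASTER)"
else
  echo "this node does not hold 192.168.1.100"
fi
```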

Test a Failover

Stop Keepalived on node1 to simulate a crash:

# On node1

sudo systemctl stop keepalived

Switch to node2 and check:

# On node2

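ip addr show eth0

Within 2–3 seconds, 192.168.1.100 should appear on node2. Stopping Keepalived makes node1 send a final priority-0 advertisement, so node2 takes over faster than it would after a real crash, where it must wait out the full timeout. Bring node1 back up and the VIP returns to it, because Keepalived preempts by default and node1’s priority of 100 beats node2’s 90: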

# On node1

sudo systemctl start keepalived

Monitor with Keepalived Logs

State transitions go to syslog. Watch live:

sudo journalctl -u keepalived -f

During failover you’ll see something like:

keepalived[1234]: VRRP_Instance(VI_1) Transition to MASTER STATE
keepalived[1234]: VRRP_Instance(VI_1) Entering MASTER STATE
keepalived[1234]: VRRP_Instance(VI_1) Sending gratuitous ARP

That last line, the gratuitous ARP, is what makes the failover transparent. Keepalived broadcasts an ARP announcement telling every device on the segment that 192.168.1.100 now lives at the new MASTER’s MAC address. Hosts and routers update their ARP caches, and switches learn which port that MAC now sits behind. Clients reconnect to the same address and reach the new MASTER. Nobody had to touch a config file.
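You can watch this happen from any client on the same segment. Read the client's neighbour (ARP) entry for the VIP before and after a failover. The MAC should change from node1's to node2's; each node's own MAC appears on the link/ether line of ip link show eth0. The filter below is a small sketch that pulls the MAC out of ip neigh output. mac_for_ip is a hypothetical helper name.

```shell
#!/bin/sh
# mac_for_ip IP: read `ip neigh show` output on stdin and print the
# link-layer address recorded for IP, if there is one.
mac_for_ip() {
  awk -v ip="$1" '$1 == ip {
    for (i = 2; i < NF; i++)
      if ($i == "lladdr") { print $(i + 1); exit }
  }'
}

# On a client, run before and after the failover:
# ping -c 1 192.168.1.100 >/dev/null
# ip neigh show 192.168.1.100 | mac_for_ip 192.168.1.100
```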

Check VRRP Status Directly

For a quick state snapshot without reading logs:

sudo kill -USR1 $(cat /var/run/keepalived.pid)

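sudo cat /tmp/keepalived.data | grep State

For counters such as advertisements received and how often a node became MASTER, send SIGUSR2 instead. Keepalived writes its statistics to /tmp/keepalived.stats:

sudo kill -USR2 $(cat /var/run/keepalived.pid)

sudo cat /tmp/keepalived.stats

Common Issues to Watch For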

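- Both nodes become MASTER (split-brain): almost always a firewall issue. VRRP uses IP protocol 112 and the multicast address 224.0.0.18. If those packets are blocked, each node thinks the other is dead. Fix it on both nodes with sudo iptables -I INPUT -p 112 -j ACCEPT. Use -I rather than -A so the rule lands at the top of the chain, ahead of any existing DROP or REJECT rule.

- VIP not responding after failover: verify that net.ipv4.ip_nonlocal_bind = 1 is active on the node that just became MASTER. Without it, a service configured to listen on 192.168.1.100 can’t bind to that address while the node is still BACKUP, so nothing is listening when the VIP arrives.

- Mismatched virtual_router_id: node1 and node2 must use the same value. Different IDs mean they never form a group, and both try to own the VIP independently.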
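When you suspect split-brain, it helps to see which nodes are actually advertising. A capture such as sudo tcpdump -ni eth0 -c 20 'ip proto 112' on either node shows VRRP advertisements as they reach the interface, before iptables INPUT rules apply. If the peer's advertisements appear in the capture while both nodes claim MASTER, the firewall is the likely culprit. The filter below condenses that output to one line per sender. It is a sketch that assumes tcpdump's usual one-line VRRP format (... IP 192.168.1.10 > 224.0.0.18: VRRPv2, Advertisement, vrid 51, prio 100, ...), and summarize_vrrp is a hypothetical name.

```shell
#!/bin/sh
# summarize_vrrp: read tcpdump text output on stdin and print one line per
# distinct (sender, vrid, priority) seen in VRRP advertisements.
summarize_vrrp() {
  awk '{
    src = ""; vrid = ""; prio = ""
    for (i = 1; i < NF; i++) {
      if ($i == "IP") src = $(i + 1)
      if ($i == "vrid") { vrid = $(i + 1); sub(/,$/, "", vrid) }
      if ($i == "prio") { prio = $(i + 1); sub(/,$/, "", prio) }
    }
    if (vrid != "") print src, "vrid=" vrid, "prio=" prio
  }' | sort -u
}

# Usage on either node (the capture needs root):
# sudo tcpdump -ni eth0 -c 20 'ip proto 112' | summarize_vrrp
```

In a healthy pair you should see only the MASTER's address. If two senders with the same vrid keep appearing for more than a moment, both nodes believe they are MASTER.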

Next Steps

With a working VIP, the obvious next move is putting a real service behind it. Deploy HAProxy or Nginx on both nodes, have them listen on the same ports, and point your upstream clients at 192.168.1.100. Traffic follows the VIP automatically — zero client reconfiguration when a failover happens.

Want to squeeze more out of the setup? Run multiple VRRP instances with different virtual_router_id values and split traffic across both nodes under normal load. node1 is MASTER for VIP-A, node2 is MASTER for VIP-B — each serves as the other’s backup. That’s active-active with failover, and it’s a well-proven pattern for load balancer pairs that need both redundancy and throughput.
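Here is one way that layout could look. It is written as a small shell function that renders mirrored configs so the two nodes can't drift apart. This is a sketch built from the single-VIP config in this guide. The function name, the second instance VI_2, its virtual_router_id 52, and the second address 192.168.1.101 are all assumptions; replace the ID and address with values unused on your segment.

```shell
#!/bin/sh
# Hypothetical generator for an active-active pair: VI_1 carries VIP-A
# (192.168.1.100) and VI_2 carries VIP-B (assumed 192.168.1.101).
# Each node is MASTER for one instance and BACKUP for the other.
render_active_active() {
  case "$1" in
    node1) a_state=MASTER a_prio=100 b_state=BACKUP b_prio=90 ;;
    node2) a_state=BACKUP a_prio=90 b_state=MASTER b_prio=100 ;;
    *) echo "usage: render_active_active node1|node2" >&2; return 1 ;;
  esac
  cat <<EOF
global_defs {
    router_id $1
}

vrrp_instance VI_1 {
    state $a_state
    interface eth0
    virtual_router_id 51
    priority $a_prio
    advert_int 1
    authentication {
        auth_type PASS
        auth_pass SecretKey123
    }
    virtual_ipaddress {
        192.168.1.100/24
    }
}

vrrp_instance VI_2 {
    state $b_state
    interface eth0
    virtual_router_id 52
    priority $b_prio
    advert_int 1
    authentication {
        auth_type PASS
        auth_pass SecretKey123
    }
    virtual_ipaddress {
        192.168.1.101/24
    }
}
EOF
}

# Usage on each node (swap node1 for node2 on the second machine):
# render_active_active node1 | sudo tee /etc/keepalived/keepalived.conf
```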