The Blind Spot of Traditional CPU and Memory Metrics

Five years into managing Kubernetes clusters, I’ve learned that the standard Horizontal Pod Autoscaler (HPA) has a massive blind spot. Most teams start by scaling based on CPU or Memory usage. It sounds logical: if a pod is working hard, add more pods. But in an event-driven world where services consume Kafka messages or process Redis tasks, these metrics often lie about the actual pressure on your system.

This reality hit me hard last year while managing a high-traffic data pipeline. Our CPU usage sat at a cool 12% because the workload was I/O-bound, yet our Kafka lag was skyrocketing. By the time the CPU finally spiked enough to trigger the HPA, we were 4.5 million messages behind. The system was reactive, lagging, and failing our users. KEDA (Kubernetes Event-Driven Autoscaling) was the tool that finally solved this headache.

How KEDA Bridges the Gap

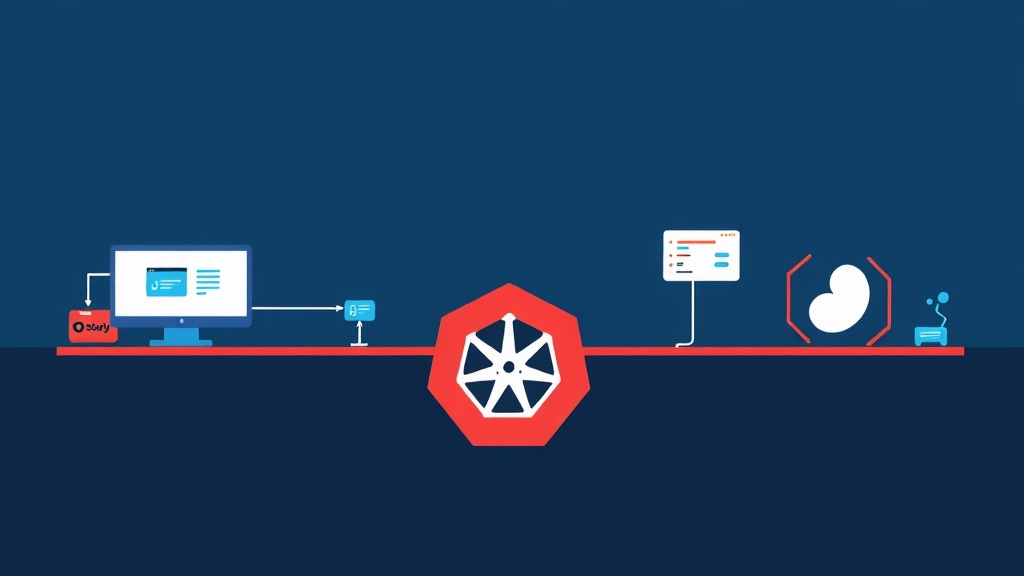

KEDA acts as a lightweight bridge between your Kubernetes cluster and external event sources. It doesn’t replace the HPA; instead, it supercharges it. KEDA allows you to scale workloads directly based on the number of events waiting in a queue or a stream. It provides the custom metrics and triggers needed to communicate with external systems like Kafka, Redis, RabbitMQ, or AWS SQS.

In practice, this is a massive win for reliability. It moves your infrastructure closer to a “serverless” model on top of your existing nodes. You can scale down to zero when there is no work to do, which slashed our staging environment costs by roughly 40%. When a large batch of data hits your broker, KEDA can burst your deployment to hundreds of pods in seconds, as long as the cluster has the capacity to schedule them.

The Core Plumbing

- Scaler: The connector that fetches metrics, such as Kafka lag or Redis list length.

- Metrics Adapter: This component translates external metrics into a format the Kubernetes HPA understands.

- ScaledObject: A custom resource (its CRD is installed with KEDA) where you define your scaling logic, including target deployments and thresholds.

Hands-on Implementation

I’ll walk you through the setup we used to stabilize our production environment. We’ll install KEDA and then configure it for two high-traffic scenarios: Kafka and Redis.

1. Installing KEDA

Helm is the easiest way to manage this. I recommend deploying KEDA into its own namespace to keep your management tools separate from your application logic.

    # Add the KEDA Helm repo
    helm repo add kedacore https://kedacore.github.io/charts

    # Update the repo
    helm repo update

    # Install KEDA
    helm install keda kedacore/keda --namespace keda --create-namespace

2. Scaling Based on Kafka Lag

Suppose you have a consumer group named order-processor. You want to add pods whenever the lag (the number of unprocessed messages) exceeds 100 messages per pod. Here is the ScaledObject configuration I used:

    apiVersion: keda.sh/v1alpha1
    kind: ScaledObject
    metadata:
      name: kafka-scaledobject
      namespace: default
    spec:
      scaleTargetRef:
        name: order-consumer-deployment   # Deployment to scale
      minReplicaCount: 1
      maxReplicaCount: 20
      triggers:
        - type: kafka
          metadata:
            bootstrapServers: kafka-cluster-kafka-bootstrap.kafka.svc:9092
            consumerGroup: order-processor
            topic: orders
            lagThreshold: "100"           # target lag per pod
            offsetResetPolicy: latest

With this config, KEDA monitors the consumer group's lag on the orders topic. If the lag hits 800 messages while 1 pod is running, the HPA targets 8 pods (800 / 100) on its next sync to clear the backlog. That assumes the topic has at least 8 partitions, because by default the Kafka scaler will not scale past the partition count. This specific change cut our processing time from 45 minutes down to less than 3 minutes during peak flash sales.
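To make the arithmetic concrete, here is a minimal Python sketch of how the target replica count falls out of the lag and the threshold. The function name and parameters are my own for illustration, not part of KEDA or the HPA, and it deliberately ignores the HPA's tolerance band and stabilization behavior.

```python
import math
from typing import Optional


def desired_replicas(total_lag: int, lag_threshold: int,
                     min_replicas: int, max_replicas: int,
                     partitions: Optional[int] = None) -> int:
    """Approximate the replica count targeted for an average-value metric."""
    if lag_threshold <= 0:
        raise ValueError("lag_threshold must be positive")
    # One pod per `lag_threshold` messages of lag, rounded up.
    wanted = math.ceil(total_lag / lag_threshold)
    if partitions is not None:
        # Consumers beyond the partition count would sit idle.
        wanted = min(wanted, partitions)
    # Clamp to the minReplicaCount / maxReplicaCount bounds.
    return max(min_replicas, min(wanted, max_replicas))


if __name__ == "__main__":
    print(desired_replicas(800, 100, 1, 20))                # 8
    print(desired_replicas(4_500_000, 100, 1, 20))          # 20
    print(desired_replicas(800, 100, 1, 20, partitions=6))  # 6
```

The second call shows why maxReplicaCount matters: a 4.5 million message backlog would ask for far more pods than the cap allows, so the threshold and the cap need to be tuned together.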

3. Scaling Based on Redis List Length

Redis is a fantastic task queue. If you use a Redis List via LPUSH and RPOP, you can scale workers based on the list length. This is perfect for background image processing or PDF generation.

    apiVersion: keda.sh/v1alpha1
    kind: ScaledObject
    metadata:
      name: redis-scaledobject
      namespace: default
    spec:
      scaleTargetRef:
        name: image-worker-deployment   # Deployment to scale
      minReplicaCount: 0                # allow scale-to-zero
      maxReplicaCount: 10
      triggers:
        - type: redis
          metadata:
            host: redis-master.default.svc.cluster.local
            port: "6379"
            listName: task_queue
            listLength: "20"            # target items per pod
            type: list

The minReplicaCount: 0 setting is the real star here. If the Redis queue stays empty through the cooldown period (300 seconds by default), KEDA scales the deployment down to zero pods. Once an item is pushed to the list, KEDA notices it on the next poll and spins a pod back up. It’s efficient and prevents you from paying for idle compute.
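The scale-to-zero behavior is easier to reason about as a small decision function. This is a simplified sketch under my own assumptions, not KEDA's actual code: the function and parameter names are hypothetical, and it skips the HPA's tolerance and stabilization window.

```python
import math


def next_replicas(list_length: int, target_length: int, current: int,
                  max_replicas: int, idle_seconds: float,
                  cooldown_seconds: float = 300) -> int:
    """Sketch of scaling for a ScaledObject with minReplicaCount: 0."""
    if list_length > 0:
        # Work is queued: at least one pod, then proportional up to the cap.
        wanted = math.ceil(list_length / target_length)
        return min(max_replicas, max(1, wanted))
    if current == 0 or idle_seconds >= cooldown_seconds:
        # Queue is empty and we are already idle or fully cooled down.
        return 0
    # Queue is empty but the cooldown has not elapsed: keep one pod warm.
    return 1


if __name__ == "__main__":
    print(next_replicas(1, 20, current=0, max_replicas=10, idle_seconds=0))
    print(next_replicas(45, 20, current=1, max_replicas=10, idle_seconds=0))
    print(next_replicas(0, 20, current=3, max_replicas=10, idle_seconds=60))
    print(next_replicas(0, 20, current=1, max_replicas=10, idle_seconds=300))
```

The four calls walk through a typical cycle: wake from zero on the first item, scale out under load, shrink to a single warm pod once the queue drains, and drop to zero after the cooldown.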

Hard-Won Lessons from Production

After six months of running KEDA, I’ve found that the default 30-second polling interval is often too slow for spiky traffic. We lowered ours to 10 seconds. However, be careful; polling too aggressively can put unnecessary load on your Redis or Kafka metadata APIs.

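Don’t ignore cooldown periods. You want to avoid “flapping,” where pods scale up and down in a rapid, jittery loop. I usually set scaleDown.stabilizationWindowSeconds to 300, which lives under advanced.horizontalPodAutoscalerConfig.behavior in the ScaledObject spec. With this five-minute window, the HPA acts on the highest replica recommendation from the last five minutes, so once we scale up, we stay there long enough to handle any immediate follow-up spikes.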
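For reference, this is roughly where those tuning knobs sit in a ScaledObject. The sketch below builds the manifest as a Python dict and prints it as JSON, which kubectl accepts just like YAML. The helper name build_scaled_object is hypothetical, and the values reuse the Kafka example from earlier; treat them as starting points rather than recommendations.

```python
import json


def build_scaled_object(polling_interval: int = 10,
                        cooldown_period: int = 300,
                        stabilization_window: int = 300) -> dict:
    """Build a ScaledObject manifest with the tuning fields filled in."""
    return {
        "apiVersion": "keda.sh/v1alpha1",
        "kind": "ScaledObject",
        "metadata": {"name": "kafka-scaledobject", "namespace": "default"},
        "spec": {
            "scaleTargetRef": {"name": "order-consumer-deployment"},
            # How often KEDA checks the trigger source, in seconds.
            "pollingInterval": polling_interval,
            # How long to wait after the last activity before scaling to zero.
            "cooldownPeriod": cooldown_period,
            "minReplicaCount": 1,
            "maxReplicaCount": 20,
            "advanced": {
                "horizontalPodAutoscalerConfig": {
                    "behavior": {
                        "scaleDown": {
                            "stabilizationWindowSeconds": stabilization_window
                        }
                    }
                }
            },
            "triggers": [{
                "type": "kafka",
                "metadata": {
                    "bootstrapServers":
                        "kafka-cluster-kafka-bootstrap.kafka.svc:9092",
                    "consumerGroup": "order-processor",
                    "topic": "orders",
                    "lagThreshold": "100",
                    "offsetResetPolicy": "latest",
                },
            }],
        },
    }


if __name__ == "__main__":
    print(json.dumps(build_scaled_object(), indent=2))
```

Assuming you save this as a script, piping its output into kubectl apply -f - applies it. Note that cooldownPeriod only governs the final step down to zero, so it has no effect here with minReplicaCount at 1; the stabilization window is what dampens ordinary scale-downs.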

Finally, make sure your app handles graceful shutdowns. When KEDA scales down, Kubernetes sends a SIGTERM. If your worker kills a process while halfway through a 10MB Kafka message, you risk data corruption or duplicate processing. Always catch that signal and finish the current task before exiting.
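Here is a minimal sketch of that pattern in Python. The GracefulWorker class and the fetch/handle callables are hypothetical stand-ins for your real consumer loop; the key idea is that the signal handler only sets a flag, and the loop checks it between tasks rather than mid-task.

```python
import signal
import time


class GracefulWorker:
    """Worker loop that finishes the in-flight task before exiting."""

    def __init__(self):
        self.stop_requested = False

    def request_stop(self, signum=None, frame=None):
        # Signal handler: just record the request, never abort mid-task.
        self.stop_requested = True

    def run(self, fetch_task, handle_task, idle_sleep=0.5):
        processed = 0
        while not self.stop_requested:
            task = fetch_task()
            if task is None:
                time.sleep(idle_sleep)  # nothing queued, back off briefly
                continue
            handle_task(task)  # runs to completion even if SIGTERM arrives
            processed += 1
        return processed


if __name__ == "__main__":
    worker = GracefulWorker()
    signal.signal(signal.SIGTERM, worker.request_stop)

    demo_queue = ["resize-1", "resize-2"]  # stand-in for Redis or Kafka

    def fetch():
        return demo_queue.pop(0) if demo_queue else None

    def handle(task):
        print("processing", task)
        if not demo_queue:
            worker.request_stop()  # demo only: stop once the queue drains

    print("processed", worker.run(fetch, handle))
```

Keep in mind that Kubernetes follows up with SIGKILL once the pod's terminationGracePeriodSeconds (30 seconds by default) runs out, so raise that value if a single task can take longer than that.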

The Bottom Line

Switching from resource-based scaling to event-driven scaling is the logical next step for modern microservices. It allows your infrastructure to respond to the work itself, not just the heat the CPU generates. Whether you are managing Kafka streams or Redis queues, scaling based on lag ensures users aren’t waiting and your cloud bill stays lean. It took us a few weeks to tune our thresholds, but the stability it brought to our production environment was worth every second.